Improving Library User Experience with A/B Testing: Principles and Process

This work is licensed under a Creative Commons Attribution 3.0 License. Please contact mpub-help@umich.edu to use this work in a way not covered by the license.

This paper was refereed by Weave's peer reviewers.

Abstract

This paper demonstrates how user interactions can be measured and evaluated with A/B testing, a user experience research methodology. A/B testing entails a process of controlled experimentation whereby different variations of a product or service are served randomly to users in order to determine the highest performing variation. This paper describes the principles of A/B testing and details a practical web-based application in an academic library. Data collected and analyzed through this A/B testing process allowed the library to initiate user-centered website changes that resulted in increased website engagement and improved user experience. A/B testing is presented as an integral component of a library user experience research program for its ability to provide quantitative user insights into known UX problems.

Introduction

Through the process of A/B testing, user experience (UX) researchers can make iterative user-centered, data-driven decisions for library products and services. The “A/B test” and its extension “A/B/n test” represent shorthand notation for a simple controlled experiment, in which users are randomly served one of two or more variations of a product or service: control (A), variation (B), and any number of additional variations (n), where the variations feature a single isolated design variable. With proper experiment design, the highest performing variation can be identified according to predefined metrics (Kohavi, 2007). Within a diverse framework of UX research methodologies, A/B testing offers a productive technique for answering UX questions.

A/B testing has been a tool of user interface designers and user experience researchers since at least 1960, when engineers at the Bell System experimented with several different versions of the touch-tone phone (Deininger, 1960). The Bell System experiment was framed by a user-centered research question: "What are the desirable characteristics of pushbuttons for use in telephone sets?" UX issues such as button size, button arrangement, lettering, button force, and button feedback were tested with Bell System employees, who were then asked which variations they preferred most and least. In designing this experiment, Deininger notes, “Perhaps the most important factor in the information processing is the individual himself” (p. 1009). Today major companies such as Google, Amazon, Etsy, and Twitter frequently use experiments to make data-driven design decisions.[1] In accordance with Google's company philosophy, engineers use experimentation to understand ever-changing user expectations and behavior (Tang, 2010, p. 23).[2] For Google, "experimentation is practically a mantra" (Tang, 2010, p. 17).

This approach is widely used because the data derived from A/B testing provides direct evidence of user behavior in support of the concept known as “perpetual beta.” In the pursuit of perpetual beta, a product or service remains under regular review, with feedback from users cycling through an iterative design process that continually seeks to improve the UX of that product or service. User-centered research such as A/B testing finds a natural fit within the model of perpetual beta, where a culture of user-driven revision helps create products and services that meet current user expectations.

In its overall goal of providing insights into user behaviors, A/B testing is similar to other widely practiced UX research methodologies such as usability testing and heuristic evaluation. The essential value and distinguishing characteristic of A/B testing is its ability to provide quantitative user feedback for known UX problems. Whereas usability testing is designed to reveal previously unknown UX problems, A/B testing is designed to reveal the optimal solution from among a set of alternative solutions to an already-known UX problem. Since the approach is most suitable for research contexts in which a specific design question can be predetermined, the question-answer structure that defines A/B testing also defines the boundaries of its usefulness. A/B testing is most effective when a clear design inquiry can be formulated in tandem with quantitatively measurable results. In this way, A/B testing can serve as an integral component of a comprehensive UX research program to collect user data and to design for user experiences that meet user expectations. The case study presented in this paper demonstrates the technique as applied to a library web design problem.

Literature Review

Much of the existing research that explores the statistical structures associated with A/B testing can be found within computer science literature, with specific investigation of sampling techniques, randomization algorithms, assignment methods, and raw data collection (Cochran, 1977; Cox, 2000; Johnson, 2002; Kohavi, 2007, 2009; Borodovsky, 2011). The concept of A/B testing has also been present in business marketing literature for several years, with a strong focus on e-commerce goals such as increasing the click-through rates of ads and the conversion rates of customers (Arento, 2010; McGlaughlin, 2006; New Media Age, 2010). A wide-ranging investigation of the UX of commercial websites is also represented in the business literature. This pursuit is couched in the business terms of return-on-investment, customer conversions, and consumer behavior, with a view towards the usability of online commercial transactions (Wang, 2007; Casaló, 2010; Lee S., 2010; Finstad, 2010; Fernandez, 2011; Tatari, 2011; Lee Y., 2012; Belanche, 2012). While librarians may not focus so intently on the practice of e-commerce, the substantial user-focused research found in the business literature is instructive for understanding online user behaviors in general.

The library science literature offers no substantial discussion of A/B testing. Commonalities with computer science and business marketing literature can be found in the shared recognition of the value of user feedback and the objective of designing for user experiences that meet user expectations. The library literature offers extensive discussion of UX and user-centered design for a range of library products and services, including websites (Bordac, 2008; Kasperek, 2011; Swanson, 2011), library subject guides (Sonsteby, 2013), and search and web-scale discovery (Gross, 2011; Lown, 2013). Liu (2008, p. 14) offered a straightforward recommendation for library user experience online: "future academic library Web sites should...respond to users’ changing needs." Within libraries, usability studies have been the dominant method for accessing user perspectives and for understanding user behaviors and expectations. Other methods include ethnographic studies (Wu, 2011; Khoo, 2012; Connaway, 2013), eye tracking (Lorigo, 2008; Höchstötter, 2009), and data mining and bibliomining (Hájek, 2012). The thrust of these studies converges around an understanding of UX research fundamentally guided by three questions: What do users think they will get? What do users actually get? How do they feel about that? The A/B testing process, when used in concert with these other methodologies, can serve as an integral component of a UX research program by introducing a mechanism for identifying the best answer from among a range of alternative answers to a UX question.

A/B Methodology & Case Study

The A/B testing process follows an adapted version of the scientific method, in which a hypothesis is made and then tested for validity through the presentation of design variations to the user. The testing process then measures the differences in user reactions to the variations using predefined metrics. For the experiment design to be effective, the central research question must be answerable and the results must be measurable. A successful framework for an A/B test follows these steps:

- Define a research question

- Refine the question with user interviews

- Formulate a hypothesis, identify appropriate tools, and define test metrics

- Set up and run experiment

- Collect data and analyze results

- Share results and make decision

These six steps comprise the A/B testing process and together provide the experiment design with clarity and direction. The case study presented below follows a line of inquiry around a library website and employs web analytics software to build measurable data that answers the initial research question. While the focus of the case study is a website, the essential principles and process of A/B testing may be applied in any context characterized by a known UX problem, a definite design question, and relevant measurable user data.

Step 1: Define a research question

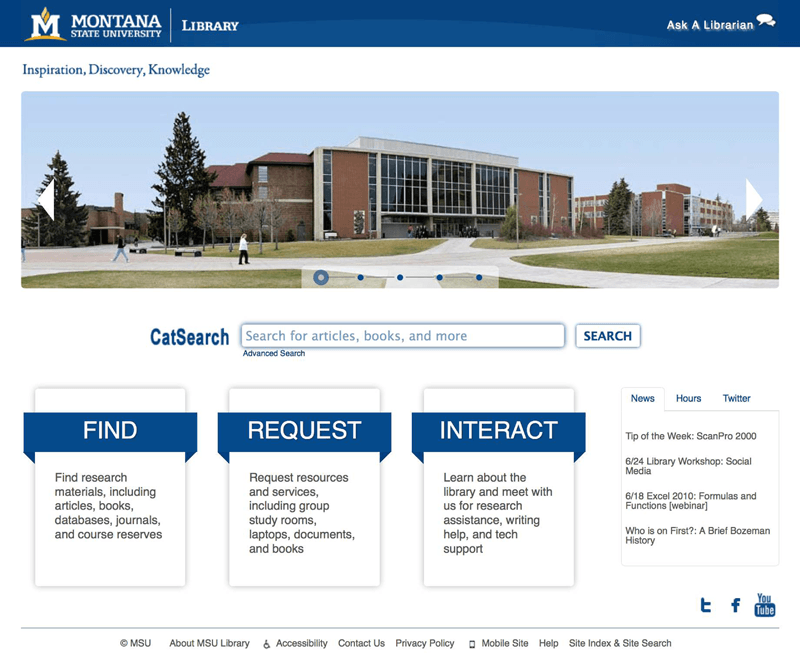

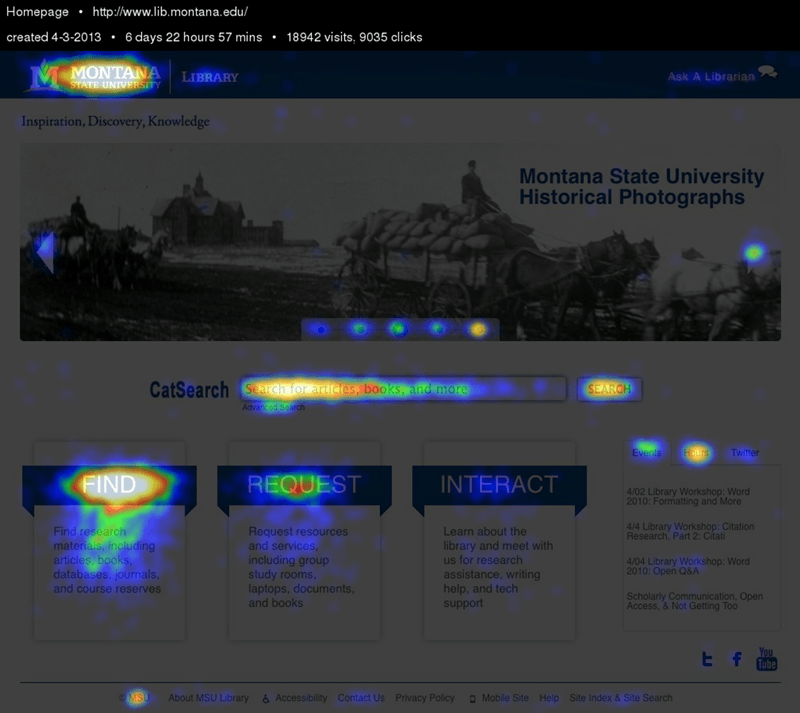

This crucial first step sets a course for the A/B test by identifying a general line of inquiry for the experiment. The research question is shaped from a known UX problem, drawing on existing user feedback such as that derived from surveys, interviews, focus groups, information architecture tree testing, paper prototyping, usability testing, or website analytics. In this case study, the research question was developed from user feedback received through website analytics. A review of the website analytics for the library’s homepage in the spring of 2013 made it apparent that users were neglecting the Interact category of content on the web page.

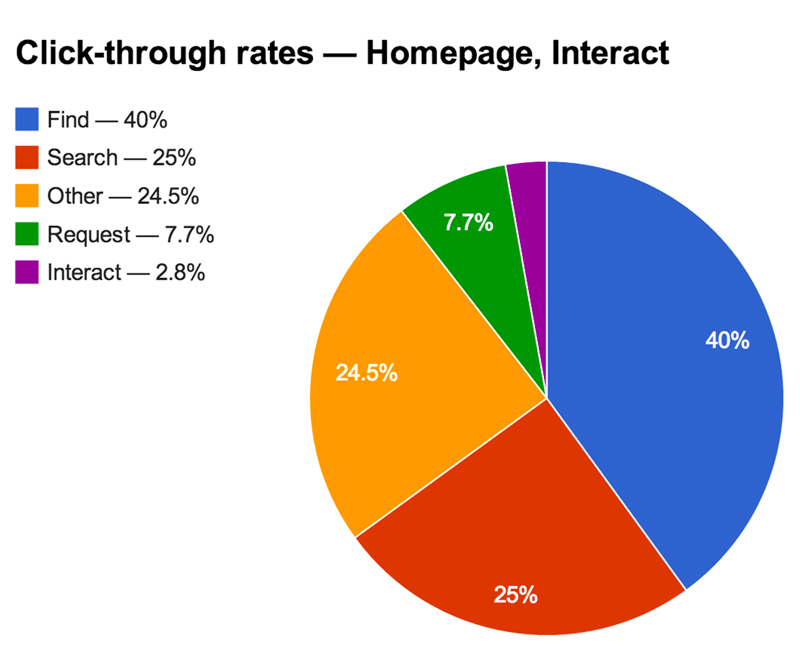

During the sample period from April 3 to April 10, 2013, which included 10,819 visits to the library homepage[3], there was a large disparity among the three main content categories. The click-through rate[4] was 35% for Find, 6% for Request, and 2% for Interact. This observation prompted a question: "Why are Interact clicks so low?" At this time the content beneath Interact included links to Reference Services, Instruction Services, Subject Liaisons, Writing Center, About, Staff Directory, Library FAQ, Give to the Library, and Floor Maps. The library’s web committee surmised that introducing this category with the abstract term "Interact" added difficulty and confusion for users trying to navigate into the library website from the homepage. Four different category titles were then proposed as variations to be tested: Connect, Learn, Help, and Services.
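The arithmetic behind these figures can be sketched in a few lines of Python. This is a minimal illustration that assumes the common definition of click-through rate as clicks divided by visits; the paper's own definition sits in a footnote and may differ in detail. The visit total is the one reported above, while the click counts are hypothetical values back-calculated to approximate the reported percentages.

```python
def click_through_rate(clicks: int, visits: int) -> float:
    """Return clicks as a fraction of visits (0.0 when there are no visits)."""
    if clicks < 0 or visits < 0:
        raise ValueError("counts must be non-negative")
    return clicks / visits if visits else 0.0


HOMEPAGE_VISITS = 10_819  # homepage visits reported for the sample period

# Hypothetical click counts, chosen only to approximate the reported rates
category_clicks = {"Find": 3_787, "Request": 649, "Interact": 216}

for category, clicks in category_clicks.items():
    rate = click_through_rate(clicks, HOMEPAGE_VISITS)
    print(f"{category}: {rate:.0%}")
```

Expressing the metric as a function of raw counts makes it straightforward to recompute the same figure for each variation once an experiment is running.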

Step 2: Refine the question with user interviews

Interviews with users regarding the different variations can help refine the A/B test. This qualitative step serves as a small-scale pre-test to confirm that the experiment variations are different enough that feedback through the A/B test itself will lead to meaningful results. Variations that are too similar will be problematic for the experiment design because they do not allow a clear winner to emerge. For the purposes of this case study, ad hoc conversations with three undergraduate students were conducted with a guerrilla-style approach.[5] Questions were designed to provide insight into user expectations of the library homepage’s category titles and category page. They included:

- Have you previously clicked on Interact?

- What content do you expect to see after you select Interact?

- Does Interact accurately describe the content that you find after selecting Interact?

- Which word best describes this category? Interact? Connect? Learn? Help? Services?

Below are selected excerpts from student responses:

Sophomore student:

- "I didn't know that 'About' was under Interact."

- "Learn doesn't work."

- "Connect is too vague and too close to Interact."

- "Services is more accurate. Help is stronger."

- "Floor maps seem odd here."

- In order of preference, this student ranked the choices: Help, Services, Interact, Connect, Learn

Junior student:

- "I am not a native English speaker, so I look for strong words. I look for help, so Help is the best, then Services too."

Senior student:

- "I've never felt the need to click on Interact. What am I interacting with? I guess the library?"

- "I never knew floor maps were there, but I have wondered before where certain rooms were."

- "Help makes sense. When I'm in the library, and I think I need help, it would at least get me to click there to find out what sort of help there is."

- "Services also works."

- "Learn doesn't really work. I just think, What am I learning? I think of reading a book or something."

- "Connect is better than Interact, but neither are very good."

- In order of preference, this student ranked the choices: Help, Services, Connect, Interact, Learn

From these brief interviews, insights about the expectations of users come into focus. These interviews indicated that the experiment variables (Interact, Connect, Learn, Help, Services) were likely to provide meaningful differences during the experimentation period and that either Help or Services was likely to be the highest performing variation. While these users indicated that the five different options would be adequately distinct for this test, users may instead indicate that the experiment variations are too alike. Users might also suggest their own unique variations that could be included in the experiment design. In either of these cases, the UX researcher may wish to further refine the variables and repeat this step.

Step 3: Formulate a hypothesis, identify appropriate tools, and define test metrics

As a counterpart to the general UX research question in Step 1, the hypothesis of Step 3 proposes a specific answer that can be measured with metrics relevant to that question. The hypothesis provides a focal point for the research question by precisely expressing the expected user reactions to the experiment variations. With the combined data from web analytics and user interviews for this case study, the library web committee formed the following experiment hypothesis: a homepage with Help or Services will generate increased website engagement compared to Learn, Connect, and Interact. With the research question centered around this hypothesis, metrics relevant to website engagement must be identified that will enable the hypothesis to be tested for validity.

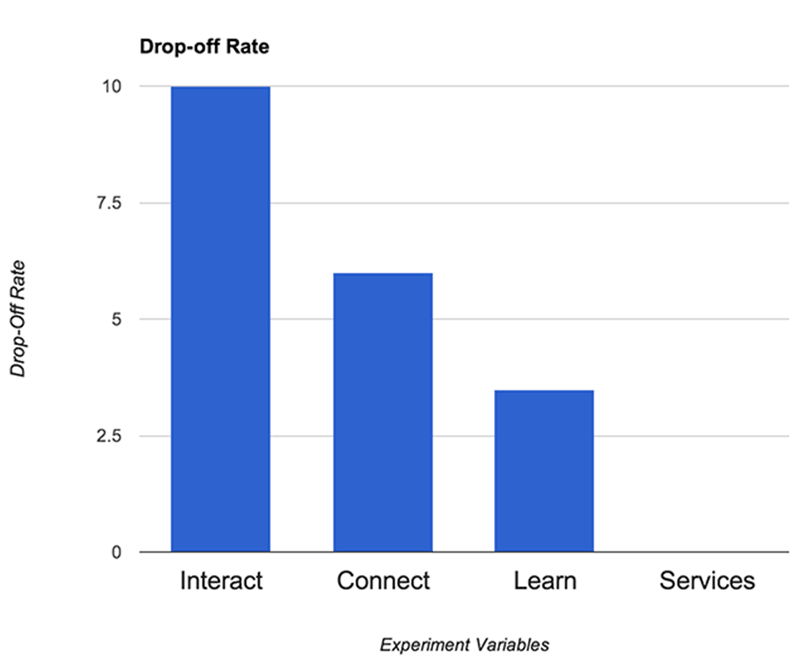

As this case study centers on a website-based A/B test, the experiment was conducted using the web analytics software Google Analytics and Crazy Egg.[6] The case study objective was to understand which category title communicated its contents most clearly to users and which category title resulted in users following through further into the library website. Three key web-based metrics were consequently used for this experiment: click-through rate for the homepage, drop-off rate for the category pages, and homepage-return rate for the category pages. Click-through rate was selected as a measure of the initial ability of the category title to attract users. Drop-off rate, which is available through the Google Analytics Users Flow and indicates the percentage of users who leave the site from a given page, was selected as a measure of the ability of the category page to meet user expectations. Homepage-return rate is a custom metric formulated for this experiment. This metric, also available through the Google Analytics Users Flow, measures the percentage of users who navigated from the library homepage to the category page and then returned to the homepage. This sequence of actions provides clues as to whether a user discovered the desired option on the category page; if not, the user would likely return to the homepage to continue navigation. Homepage-return rate was therefore selected as a second measure of the ability of the category page to meet user expectations. The combined feedback from these three metrics provides multiple points of view into user behavior that together help test the hypothesis.
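As a rough illustration of how the three metrics relate to one another, the sketch below computes them from ordered page paths, one path per visit. It is not how Google Analytics or Crazy Egg calculate their reports. The page labels, the `engagement_metrics` function, and the sample sessions are all hypothetical, and the sketch makes simplifying assumptions: only the first homepage view in a visit is considered, and drop-off and homepage-return rates are taken as shares of the visits that clicked into the category page.

```python
from typing import Dict, List

HOME = "home"          # hypothetical label for the library homepage
CATEGORY = "category"  # hypothetical label for the category page under test


def engagement_metrics(sessions: List[List[str]]) -> Dict[str, float]:
    """Compute the three experiment metrics from ordered page paths."""
    home_visits = clicked = dropped = returned = 0
    for path in sessions:
        if HOME not in path:
            continue
        home_visits += 1
        i = path.index(HOME)
        # A click-through means the category page directly follows the homepage
        if path[i + 1:i + 2] != [CATEGORY]:
            continue
        clicked += 1
        after_category = path[i + 2:]
        if not after_category:
            dropped += 1           # the visit ended on the category page
        elif after_category[0] == HOME:
            returned += 1          # the visitor went straight back to the homepage

    def rate(part: int, whole: int) -> float:
        return part / whole if whole else 0.0

    return {
        "click_through_rate": rate(clicked, home_visits),
        "drop_off_rate": rate(dropped, clicked),
        "homepage_return_rate": rate(returned, clicked),
    }


# Invented sessions for illustration only
sample_sessions = [
    ["home", "category", "reference-services"],  # followed through
    ["home", "category"],                        # dropped off
    ["home", "category", "home", "find"],        # returned to the homepage
    ["home", "find"],                            # chose another category
    ["home"],                                    # left from the homepage
]
print(engagement_metrics(sample_sessions))
```

Under these definitions, a lower drop-off rate and a lower homepage-return rate both suggest that visitors found what they expected on the category page.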

Step 4: Set up and run experiment

A/B tests operate most quickly and efficiently when variations reflect a subtle and iterative design progression. Experiments that intend to test stark design differences, on the other hand, may result in a diminished user experience and a less efficient testing process. Consider for instance an A/B test that seeks to find the optimal layout of a web page. If the experiment were to include significant design changes, then users who encountered different variations might become confused, page navigation would become difficult, and the user experience would likely suffer. For A/B tests that feature such stark differences, the experiment designers may wish to include less than 100% of users so as to reduce the risk of user confusion. With tests that feature more finely drawn design changes, users are likely to experience only minimal disruption during the course of the experiment. For A/B tests that feature minor differences, the experiment designers may wish to include 100% of users. Tests that reflect iterative design changes and that include all users will lead to faster results, because the sample size needed to detect a difference between variations accumulates in a shorter period of time.
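The relationship between the share of users included and the length of a test can be made concrete with a standard sample-size approximation for comparing two proportions. The sketch below is an illustration under stated assumptions rather than the procedure used in the case study: the function names, the 2% and 3% click-through rates, and the figure of 1,350 daily visits are hypothetical, and the conventional 5% significance level and 80% power are assumed defaults.

```python
from math import ceil
from statistics import NormalDist


def visits_per_variation(p_control: float, p_variation: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visits needed per variation to detect the given difference."""
    if p_control == p_variation:
        raise ValueError("the two rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_variation * (1 - p_variation)
    effect = p_variation - p_control
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_needed(n_per_variation: int, variations: int,
                daily_visits: float, share_included: float) -> int:
    """Days required to collect the sample at a given share of traffic."""
    if not 0 < share_included <= 1:
        raise ValueError("share_included must be in (0, 1]")
    return ceil(n_per_variation * variations / (daily_visits * share_included))


# Hypothetical inputs: detect a rise from 2% to 3% across five variations
n = visits_per_variation(0.02, 0.03)
for share in (1.0, 0.5):
    print(f"{share:.0%} of traffic: {days_needed(n, 5, 1_350, share)} days")
```

Halving the share of included traffic roughly doubles the duration, which is the trade-off to weigh when a disruptive design calls for exposing fewer users.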

Since the test variables in this case study were largely non-disruptive, 100% of website visitors were included for a duration of three weeks, from May 29 to June 18, 2013. Based on our typical web traffic, this period of time allowed us to collect enough data for a clear winner to emerge. The experiment was designed so that each user was assigned to a variation at random with equal probability and each variation received an approximately equal number of user visits. Other A/B test designs may employ alternative sampling techniques, and the exact mechanics of an experiment design will vary according to the tools used. For example, Google Analytics offers an alternate experiment design known as multi-armed bandit, in which the randomization distribution is updated as the experiment progresses so that web traffic is increasingly directed towards higher-performing variations. This approach is often favored in e-commerce A/B tests, where a profit calculation may determine that low-performing variations should be served to users only minimally.
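To illustrate the multi-armed bandit idea in general terms, the sketch below uses Thompson sampling, one common bandit strategy, chosen here for illustration. It is not presented as the algorithm Google Analytics implements. The `ThompsonSampler` class, the `simulate` helper, and the click-through rates fed to the simulation are all hypothetical.

```python
import random
from typing import Dict, Iterable, Optional


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of variations."""

    def __init__(self, variations: Iterable[str], seed: Optional[int] = None) -> None:
        self._rng = random.Random(seed)
        self.clicks: Dict[str, int] = {name: 0 for name in variations}
        self.misses: Dict[str, int] = {name: 0 for name in self.clicks}

    def choose(self) -> str:
        # Draw a plausible click-through rate for each variation from its
        # Beta(clicks + 1, misses + 1) posterior and serve the highest draw.
        draws = {
            name: self._rng.betavariate(self.clicks[name] + 1, self.misses[name] + 1)
            for name in self.clicks
        }
        return max(draws, key=draws.get)

    def record(self, name: str, clicked: bool) -> None:
        if clicked:
            self.clicks[name] += 1
        else:
            self.misses[name] += 1


def simulate(true_rates: Dict[str, float], visits: int, seed: int = 0) -> Dict[str, int]:
    """Serve simulated visits and count how often each variation was shown."""
    rng = random.Random(seed)
    sampler = ThompsonSampler(true_rates, seed=seed + 1)
    served = {name: 0 for name in true_rates}
    for _ in range(visits):
        name = sampler.choose()
        served[name] += 1
        sampler.record(name, rng.random() < true_rates[name])
    return served


# Hypothetical click-through rates, invented purely for the simulation
print(simulate({"Interact": 0.02, "Services": 0.10}, visits=5_000))
```

In contrast to the equal-allocation design used in the case study, the simulated traffic drifts toward the stronger variation as evidence accumulates, which reduces exposure to weak variations at the cost of less data about them.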

Google Analytics includes a client-side experimentation tool called Experiments, a powerful option that provides the randomization mechanics for website-based A/B testing.[7] Experiment settings within Google Analytics control the percentage of traffic to be included in the experiment and the time duration for the experiment.[8] As a supplement to Google Analytics, Crazy Egg collects user click data and generates tabular and visual reports of user behavior. While Crazy Egg itself is not required to run A/B tests, it was included in this case study to provide a fuller set of user click data than is available through Google Analytics alone.

With the case study hypothesis formulated, metrics defined, and relevant tools identified, the web committee created variation pages and the experiment was set up using Google Analytics and Crazy Egg. Five different variations of the library homepage ran concurrently on the website, each served randomly and in real time to website visitors using Google Analytics. The original page and each variation were given sequential names and were located within the same directory.

| Experiment Name | Category Title | URL |
|---|---|---|
| Control | Interact | www.lib.montana.edu/index.php |
| Variation 1 | Connect | www.lib.montana.edu/index2.php |
| Variation 2 | Learn | www.lib.montana.edu/index3.php |
| Variation 3 | Help | www.lib.montana.edu/index4.php |
| Variation 4 | Services | www.lib.montana.edu/index5.php |
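The equal-probability assignment of visitors to these five pages can be sketched as follows. In the case study the randomization was handled by Google Analytics; the code below is only an illustration of one common technique, hashing a visitor identifier into evenly sized buckets so that a returning visitor keeps seeing the same variation. The `assign_variation` function, the experiment label, and the visitor identifiers are hypothetical; the URLs are those listed in the table.

```python
import hashlib
from collections import Counter

VARIATION_URLS = {
    "Control": "www.lib.montana.edu/index.php",
    "Variation 1": "www.lib.montana.edu/index2.php",
    "Variation 2": "www.lib.montana.edu/index3.php",
    "Variation 3": "www.lib.montana.edu/index4.php",
    "Variation 4": "www.lib.montana.edu/index5.php",
}


def assign_variation(visitor_id: str, experiment: str = "category-title") -> str:
    """Map a visitor to one variation, uniformly and repeatably."""
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).digest()
    names = list(VARIATION_URLS)
    # The first 8 bytes of the hash act as a uniformly distributed integer
    bucket = int.from_bytes(digest[:8], "big") % len(names)
    return names[bucket]


# Hypothetical visitor identifiers, e.g. values stored in a cookie
counts = Counter(assign_variation(f"visitor-{i}") for i in range(10_000))
for name in VARIATION_URLS:
    print(f"{name}: {counts[name]} visits -> {VARIATION_URLS[name]}")
```

Because the assignment depends only on the identifier, each variation receives close to one fifth of the traffic while any individual visitor has a consistent experience across visits.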

Step 5: Collect data and analyze results

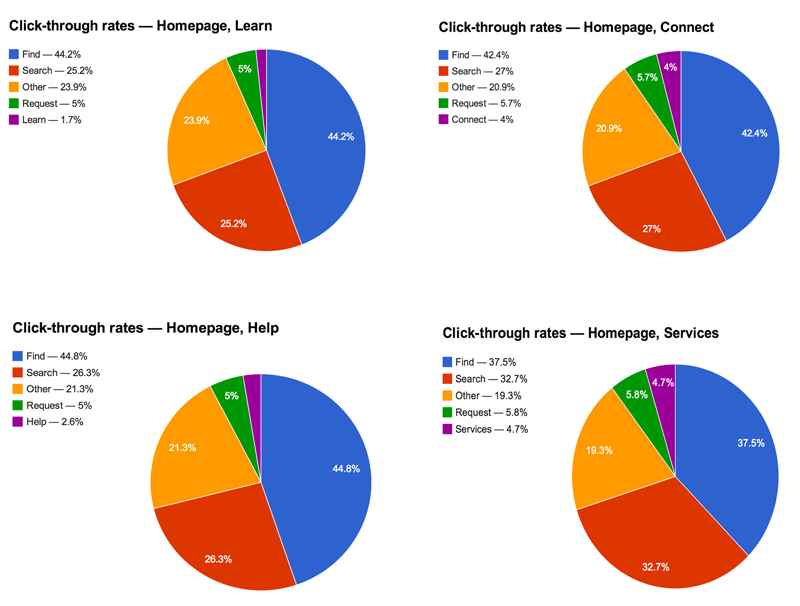

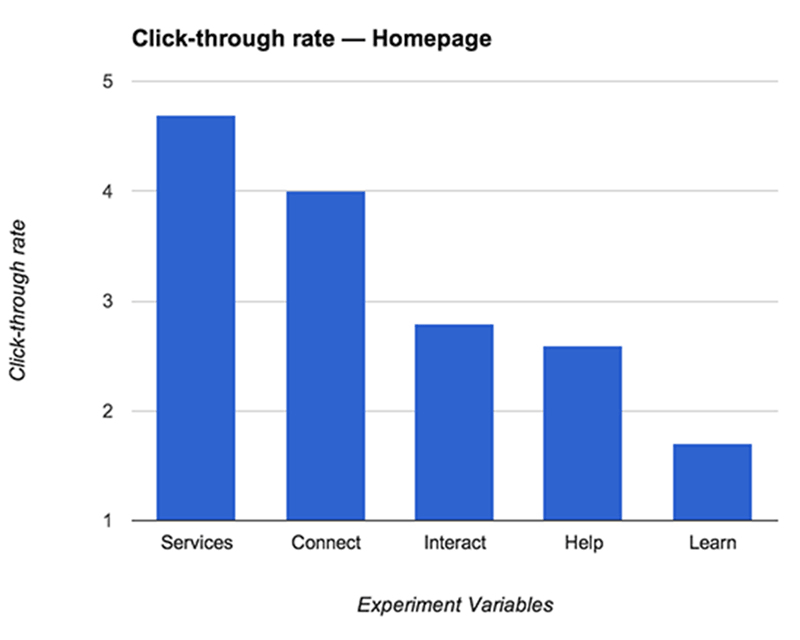

With the hypothesis defined, metrics identified, and experiment launched, the test itself can run its course for its defined duration. Experiment visits for this case study reflected a typical number of visits for this time period, and at the end of the three-week experiment duration, data for the three key metrics were analyzed. Click-through rate analysis was organized into five categories according to the primary actions of the library homepage: clicks into Search, clicks into Find, clicks into Request, clicks into the variable category title, and clicks into Other, defined as all other entry points on the page.

“Variation 4 - Services” was the highest performing across click-through rate, drop-off rate, and homepage-return rate.[9] This variation performed exceptionally well in drop-off rate and homepage-return rate. During the experiment period, of those visitors to this variation who clicked into the category page, 0% returned to the homepage and 0% dropped off the page. These unusually low figures would likely not be replicated over the long term. When evaluated comparatively, however, “Variation 4 - Services” emerged as the most successful variation according to the experiment metrics. When these results were combined with the user interview responses from Step 2, which indicated that “Variation 4 - Services” or “Variation 3 - Help” would be the most successful variation, “Variation 4 - Services” was identified as the overall highest-performing variation.
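One way to check whether a difference in click-through rate between two variations is larger than chance alone would explain is a two-proportion z-test. The sketch below is an illustration and not the analysis performed in the case study, whose figures are reported in a footnote; the `two_proportion_z_test` function and the click and visit counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist
from typing import Tuple


def two_proportion_z_test(clicks_a: int, visits_a: int,
                          clicks_b: int, visits_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two rates."""
    if visits_a <= 0 or visits_b <= 0:
        raise ValueError("each variation needs at least one visit")
    rate_a = clicks_a / visits_a
    rate_b = clicks_b / visits_b
    pooled = (clicks_a + clicks_b) / (visits_a + visits_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    if std_err == 0:
        return 0.0, 1.0  # no clicks (or all clicks) in both groups
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: control (Interact) versus Variation 4 (Services)
z, p = two_proportion_z_test(clicks_a=80, visits_a=4_000,
                             clicks_b=140, visits_b=4_000)
print(f"z = {z:.2f}, p = {p:.5f}")
```

When several variations are each compared against the control, a stricter threshold, such as dividing the significance level by the number of comparisons, is a common precaution against false positives. Rates computed from very few visits, such as a 0% figure on a small sample, call for particular caution.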

While the experiment described in this case study produced a successful test, not every test will produce a clear winner. Ambiguous outcomes may result from poor experiment design or a misconfigured test setup. In cases where no apparent winner emerges, the UX researcher can follow the A/B testing steps to refocus the research question, rework the design variables, or retest the variations with an altered experiment design. If a process of refinement continues to produce no meaningful results, the central research question may be more constructively approached from an alternative UX research perspective.

Step 6: Share results and make decision

An essential final step in the A/B testing process is to convert experiment data into meaningful results for peers and decision-makers. The UX researcher is tasked with communicating the A/B testing process so that the hypothesis, metrics, and results are meaningful in the context of user experience. Data visualization tools such as Crazy Egg, for example, allow complex click data to be presented compellingly to non-technical colleagues. With user data collected and evaluated for this case study, the results were reported to the library web committee: more users clicked through and followed further into the website when the category title was Services. As a result of this A/B test, the library homepage design was changed to include the Services category title.

Discussion

The A/B testing process provides a structure to ask and answer UX questions about library products and services. User behavior insights from this process can be unexpected, and it is crucial that unexpected results remain welcome during the A/B process. In early discussions regarding the experiment detailed in this paper, members of the library web committee favored Learn, the category title that later proved to be the lowest-performing variation during the experiment period. Had A/B testing not been introduced to these design discussions, decision-making regarding the category title would have suffered for its lack of direct user feedback. This example A/B test allowed the library to gather quantitative user data by testing five different variations of the website homepage simultaneously. The highest-performing variation improved the library website homepage by creating a user experience that meets user expectations to a greater degree than the previous version of the homepage, as measured by click-through rate, drop-off rate, and homepage-return rate.

The case study presented in this paper describes one website-focused application of A/B testing. The central principles of user-centered experimentation may be applied in creative ways throughout a library’s range of products and services. For example, a library may wish to test the language or design of its email newsletters to improve subscriber engagement and event attendance. Digital signage may also be a subject of A/B testing in a way that reveals the optimal design for gaining the attention of users and communicating relevant information. Mobile app notifications could also be tested with an experiment design that evaluates the effectiveness of various notification messages in generating app activity.

While the A/B testing process offers a powerful and flexible research technique, limitations exist that must be considered. When employed in isolation, A/B testing can lead to incomplete conclusions. Consider a UX research initiative that uses only A/B testing in evaluating the effectiveness of a call-to-action button in a web application. In a narrowly focused experiment that examines the design of the button and uses click-through rate as the metric, results may skew in favor of a bright or otherwise conspicuous button variation at the expense of other UX problems such as poor information architecture or confusing web copy. UX research into this call-to-action button will benefit from multiple and varied approaches that can together provide a wider scope for the UX problem. As a quantitative evaluation of user behavior, A/B testing itself does not offer qualitative insights that would reveal the purpose or reasons behind user behaviors. The fundamental value of A/B testing lies in its ability to provide quantitative user insights into known UX problems, problems which themselves must be identified through alternative UX research methodologies.

For these reasons it is necessary to ground experimentation in a wider context by integrating A/B testing into a comprehensive UX research program. Controlled experimentation is most effective in providing user data in complement to additional UX research methods that may include usability studies, paper prototyping, ethnographic studies, data mining, and eye tracking. An A/B test for instance may indicate that users prefer certain library workshop descriptions within a promotional email, as measured by inbound web traffic and subsequent workshop attendance. Usability studies may then further reveal that users prefer those workshops due to the style of description but also because the topics are particularly relevant or interesting. These alternative UX research methods respond to the limitations of A/B testing and together constitute an effective constellation of UX research techniques.

UX research reaches its full potential when quantitative and qualitative methodologies are combined to provide a multi-faceted view of user behaviors, perspectives, and expectations. Such varied UX research programs help realize the concept of perpetual beta, where the design of products and services undergoes a recurring user-centered process of building, testing, analyzing, and iterating. The A/B testing process described in this paper provides one element of a UX research framework that allows for decision-making that is iterative and guided by user behaviors. In sum, A/B testing proved to be an effective method for collecting user data and improving library user experience by offering a method for answering UX questions. The A/B testing process ultimately represents a valuable form of observation, where known UX issues are productively informed by those users who interact directly with the library’s products and services.

References

- Allen, J., & Chudley, J. (2012). Smashing UX design: Foundations for designing online user experiences (Vol. 34). John Wiley & Sons.

- Arento, T. (2010). A/B testing in improving conversion on a website: Case: Sanoma Entertainment Oy. (BA Thesis). Available from Theseus.fi database (URN: NBN: fi: Bachelor's 201003235880).

- Atterer, R., Wnuk, M., & Schmidt, A. (2006, May). Knowing the user's every move: user activity tracking for website usability evaluation and implicit interaction. In Proceedings of the 15th international conference on World Wide Web (pp. 203-212). ACM.

- Belanche, D., Casaló, L. V., & Guinalíu, M. (2012). Website usability, consumer satisfaction and the intention to use a website: the moderating effect of perceived risk. Journal of Retailing and Consumer Services, 19(1), 124-132. doi:10.1016/j.jretconser.2011.11.001.

- Bordac, S., & Rainwater, J. (2008). User-centered design in practice: the Brown University experience. Journal of Web Librarianship, 2(2-3), 109-138.

- Borodovsky, S., & Rosset, S. (2011, December). A/B testing at SweetIM: The importance of proper statistical analysis. In Data Mining Workshops (ICDMW), 2011 IEEE 11th International Conference on (pp. 733-740). IEEE.

- Brooks, P., & Hestnes, B. (2010). User measures of quality of experience: why being objective and quantitative is important. IEEE Network, 24(2), 8-13.

- Casaló, L. V., Flavián, C., & Guinalíu, M. (2010). Generating trust and satisfaction in e-services: the impact of usability on consumer behavior. Journal of Relationship Marketing, 9(4), 247-263. doi:10.1080/15332667.2010.522487.

- Cochran, W. G. (1977). Sampling techniques. New York: Wiley.

- Cox, D. R., & Reid, N. (2000). The theory of the design of experiments. New York: CRC Press.

- Connaway, L. S., Hood, E. M., Lanclos, D., White, D., & Le Cornu, A. (2013). User-centered decision making: a new model for developing academic library services and systems. IFLA Journal, 39(1), 20-29.

- Deininger, R. (1960). Human factors engineering studies of the design and use of pushbutton telephone sets. Bell System Technical Journal, 39(4), 995-1012.

- Fernandez, A., Insfran, E., & Abrahão, S. (2011). Usability evaluation methods for the web: A systematic mapping study. Information and Software Technology, 53(8), 789-817. doi:10.1016/j.infsof.2011.02.007

- Finstad, K. (2010). The usability metric for user experience. Interacting with Computers, 22(5), 323-327. doi:10.1016/j.intcom.2010.04.004

- Gross, J., & Sheridan, L. (2011). Web scale discovery: The user experience. New Library World, 112(5/6), 236-247. doi:10.1108/03074801111136275.

- Hájek, P., & Stejskal, J. (2012). Analysis of user behavior in a public library using bibliomining. In Oprisan, S., Zaharim, A., Eslamian, S., Jian, MS, Aiub, CA and Azami, A. (Eds.), Advances in Environment, Computational Chemistry and Bioscience (pp. 339-344). WSEAS Press, Montreux, Switzerland. Available at: http://www.wseas.us/e-library/conferences/2012/Montreux/BIOCHEMENV/BIOCHEMENV-53.pdf.

- Herman, J. (2004, April). A process for creating the business case for user experience projects. In CHI'04 extended abstracts on Human factors in computing systems (pp. 1413-1416). ACM.

- Höchstötter, N., & Lewandowski, D. (2009). What users see–Structures in search engine results pages. Information Sciences, 179(12), 1796-1812.

- Johnson, R. A., & Wichern, D. W. (2002). Applied multivariate statistical analysis (Vol. 5, No. 8). Upper Saddle River, NJ: Prentice Hall.

- Kasperek, S., Dorney, E., Williams, B., & O’Brien, M. (2011). A use of space: The unintended messages of academic library web sites. Journal of Web Librarianship, 5(3), 220-248.

- Khoo, M., Rozaklis, L., & Hall, C. (2012). A survey of the use of ethnographic methods in the study of libraries and library users. Library & Information Science Research, 34(2), 82-91.

- Kohavi, R., Henne, R. M., & Sommerfield, D. (2007, August). Practical guide to controlled experiments on the web: Listen to your customers not to the hippo. In Proceedings of the 13th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 959-967). ACM.

- Kohavi, R., Longbotham, R., Sommerfield, D., & Henne, R. M. (2009). Controlled experiments on the web: survey and practical guide. Data Mining and Knowledge Discovery, 18(1), 140-181. doi:10.1007/s10618-008-0114-1.

- Kohavi, R., Deng, A., Frasca, B., Longbotham, R., Walker, T., & Xu, Y. (2012, August). Trustworthy online controlled experiments: Five puzzling outcomes explained. In Proceedings of the 18th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 786-794). ACM.

- Lee, S., & Koubek, R. J. (2010). Understanding user preferences based on usability and aesthetics before and after actual use. Interacting with Computers, 22(6), 530-543. doi:10.1016/j.intcom.2010.05.002.

- Lee, Y., & Kozar, K. A. (2012). Understanding of website usability: Specifying and measuring constructs and their relationships. Decision Support Systems, 52(2), 450-463. doi:10.1016/j.dss.2011.10.004.

- Liu, S. (2008). Engaging users: the future of academic library web sites. College & Research Libraries, 69(1), 6-27.

- Lorigo, L., Haridasan, M., Brynjarsdóttir, H., Xia, L., Joachims, T., Gay, G., & Pan, B. (2008). Eye tracking and online search: Lessons learned and challenges ahead. Journal of the American Society for Information Science and Technology, 59(7), 1041-1052.

- Lown, C., Sierra, T., & Boyer, J. (2013). How users search the library from a single search box. College & Research Libraries, 74(3), 227-241.

- Marks, H. M. (2000). The progress of experiment: science and therapeutic reform in the United States, 1900-1990. Cambridge: Cambridge University Press.

- McGlaughlin, F., Alt, B., & Usborne, N. (2006). The power of small changes tested. Marketing Experiments. Retrieved from http://www.marketingexperiments.com/improving-website-conversion/power-small-change.html.

- Nielsen, J. (1994). Guerrilla HCI: Using discount usability engineering to penetrate the intimidation barrier. Cost-justifying usability, 245-272.

- Nguyen, T. D., & Nguyen, T. T. (2011). An examination of selected marketing mix elements and brand relationship quality in transition economies: Evidence from Vietnam. Journal of Relationship Marketing, 10(1), 43-56.

- O'Reilly, T. (2009). What is web 2.0. O'Reilly Media, Inc.

- Ouellette, D. (2011). Subject guides in academic libraries: A user-centred study of uses and perceptions/Les guides par sujets dans les bibliothèques académiques: Une étude des utilisations et des perceptions centrée sur l'utilisateur. Canadian Journal of Information and Library Science, 35(4), 436-451.

- Rossi, P. H., & Lipsey, M. W. (2004). Evaluation: A systematic approach. Sage.

- Sonsteby, A., & DeJonghe, J. (2013). Usability testing, user-centered design, and LibGuides subject guides: A case study. Journal of Web Librarianship, 7(1), 83-94. doi:10.1080/19322909.2013.747366.

- Swanson, T. A., & Green, J. (2011). Why we are not Google: Lessons from a library web site usability study. The Journal of Academic Librarianship, 37(3), 222-229. doi:10.1016/j.acalib.2011.02.014.

- Stitz, T., Laster, S., Bove, F. J., & Wise, C. (2011). A path to providing user-centered subject guides. Internet Reference Services Quarterly, 16(4), 183-198. doi:10.1080/10875301.2011.621819.

- Tatari, K., Ur-Rehman, S., & Ur-Rehman, W. (2011). Transforming web usability data into web usability information using information architecture concepts & tools. Interdisciplinary Journal of Contemporary Research in Business, 3(4), 703-718.

- Tang, D., Agarwal, A., O'Brien, D., & Meyer, M. (2010, July). Overlapping experiment infrastructure: More, better, faster experimentation. In Proceedings of the 16th ACM SIGKDD international conference on Knowledge discovery and data mining (pp. 17-26). ACM.

- Visser, E. B., & Weideman, M. (2011). An empirical study on website usability elements and how they affect search engine optimisation. South African Journal of Information Management, 13(1). doi:10.4102/sajim.v13i1.428.

- Wang, J., & Senecal, S. (2007). Measuring perceived website usability. Journal of Internet Commerce, 6(4), 97-112.

- Wu, S. K., & Lanclos, D. (2011). Re-imagining the users' experience: An ethnographic approach to web usability and space design. Reference Services Review, 39(3), 369-389.

- Multivariate testing: A broad sample. (2010, February 4). New Media Age, 18. Retrieved from Academic OneFile.

Notes

http://mcfunley.com/design-for-continuous-experimentation; https://blog.twitter.com/2013/experiments-twitter; http://hbr.org/2007/10/the-institutional-yes/ar/1; http://googleblog.blogspot.com/2009/03/make-sense-of-your-site-tips-for.html.

For Google’s 10-point company philosophy: http://www.google.com/about/company/philosophy/.

This time period was chosen as a representative snapshot of library web traffic.

Click-through rate is defined as the number of users who click on a specific link divided by the number of users who visit the page containing that link, expressed as a percentage. For example, if a page receives 100 visits and a particular link on that page receives 1 click, then the click-through rate for that link would be 1%.
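The arithmetic in the worked example (1 click out of 100 visits) can be sketched in a few lines of Python. This is only an illustration: the function name `click_through_rate` and its parameters are hypothetical and are not part of Google Analytics or of the study's tooling.

```python
def click_through_rate(clicks: int, visits: int) -> float:
    """Return clicks on a link as a percentage of visits to its page.

    Hypothetical helper for illustration only; the counts would come
    from whatever analytics report is in use.
    """
    if visits <= 0:
        raise ValueError("visits must be a positive number")
    return 100.0 * clicks / visits


# The example from the note: 100 page visits, 1 click on the link.
print(f"{click_through_rate(1, 100):.1f}%")
```

Dividing by visits rather than by clicks keeps the rate between 0% and 100% whenever each visit produces at most one click on the link.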

Such “guerrilla” testing is an efficient and effective method for conducting rapid qualitative user experience research. See Nielsen (1994) and Allen & Chudley (2012, p. 94) for background on and a description of “guerrilla” testing.

http://googleblog.blogspot.com/2009/03/make-sense-of-your-site-tips-for.html; https://support.google.com/analytics/answer/2844870.

For detailed descriptions of these settings, see https://support.google.com/analytics/answer/1745147?hl=en&ref_topic=1745207&rd=1; https://developers.google.com/analytics/devguides/platform/experiments-overview.

Due to a reporting limitation within Google Analytics, Help category data was incomplete.